Which LLM Is Best for Recruiting and Candidate Sourcing?

I tested multiple AI models on the same sourcing brief. Some hallucinated. Some refused. One changed how I find candidates. Here is what I found.

Every week I see some version of the same post. A recruiter explains that LinkedIn search is getting harder. Filters are broken. Boolean strings return garbage. Good candidates are invisible. The algorithm buries the people you actually want to find.

I do not think that is the whole story.

LinkedIn search has always had limits. What has changed is that a lot of recruiters have stopped building around those limits. They are waiting for the platform to do work it was never designed to do. Meanwhile, there is an entirely different layer of search infrastructure that most of them have not touched.

I have been building AI-powered sourcing agents for more than a year. I have tested more models than I can count at this point, running the same candidate profiles through different tools, comparing what comes back, and slowly building a clearer picture of which systems are genuinely useful for finding people who are not advertising themselves.

This article is about what I found when I ran a structured test across eight different large language models, all given the same candidate brief, all asked to surface real people.

Some of them hallucinated. Some of them refused entirely. A couple of them surprised me. Every model below runs in a regular chat interface, so you can try it without vibe-coding an application or installing a new agent.

Before you try this yourself, three things you need to know

First: your IP address matters. These tools use your IP for geolocation to tailor search results, similar to how Google localizes results. The same query run from a residential IP can return different results than it does through a data center VPN. If you are getting poor results, try a different network before assuming the model is the problem.

Second: model version matters more than most people realize. The free tier of any given AI tool is rarely running the same model as the paid tier. The gap in capability is real, not marketing. If you are testing these tools for sourcing purposes and running the free version, your results will be lower quality almost every time.

Third: to view LinkedIn profiles specifically, you need to be logged in to a LinkedIn account. These tools can find public profile URLs, but whether those URLs resolve for you depends on your own login state. A tool returning a URL is not the same as you being able to see the profile.

The test setup

I wanted a realistic brief. Not an easy role. Not something with a hundred candidates posting openly about themselves.

I chose a Senior Accountant based in Prague. Here is the profile I built the search around:

Five years of experience in the same or a comparable role. A working understanding of accounting principles and financial reporting. Strong communication. A proactive working style. Attention to detail. Experience in a fast-moving company environment. Fluency in both Czech and English.

That last requirement is the one that makes this hard. Czech and English fluency in a finance role, in Prague specifically, narrows the pool considerably. Most candidates who fit that profile are not posting about themselves in English. Many of them are not active on LinkedIn at all. This is exactly the kind of search where Boolean strings fail and where a more creative approach starts to pay off.

Here is the simplified version of the prompt I gave each model:

=== INPUTS ===

Job Title: Senior Accountant

Location: Prague

Key Requirements: 5 years of work experience in the same or similar position, In-depth understanding of accounting principles and financial reporting, Fluency in Czech and English.

=== INSTRUCTIONS ===

The full prompt is at the end of this article!

It includes instructions I have refined over dozens of sourcing runs. But the brief above was enough to create meaningful differences between models.
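If you want to rerun the same brief for other roles, it can help to generate it from a small template. The sketch below is an illustration only, not the tooling used in this test: `build_brief` and its parameters are hypothetical names, and the instructions block is left as a placeholder because the full prompt is not reproduced in this section.

```python
from typing import Sequence


def build_brief(
    job_title: str,
    location: str,
    requirements: Sequence[str],
    instructions: str = "<paste your own instructions here>",
) -> str:
    """Assemble a sourcing brief in the INPUTS / INSTRUCTIONS layout shown above."""
    lines = [
        "=== INPUTS ===",
        f"Job Title: {job_title}",
        f"Location: {location}",
        # Requirements are joined into one comma-separated line, as in the brief.
        "Key Requirements: " + ", ".join(r.strip() for r in requirements),
        "",
        "=== INSTRUCTIONS ===",
        instructions,  # placeholder by default; supply your own text
    ]
    return "\n".join(lines)


brief = build_brief(
    job_title="Senior Accountant",
    location="Prague",
    requirements=[
        "5 years of work experience in the same or similar position",
        "In-depth understanding of accounting principles and financial reporting",
        "Fluency in Czech and English",
    ],
)
print(brief)
```

Giving every model the same generated text keeps the comparison fair: any difference in output then comes from the model, not from small wording changes in the brief.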

Here is what each one did.

ChatGPT (free)

Visit https://chatgpt.com

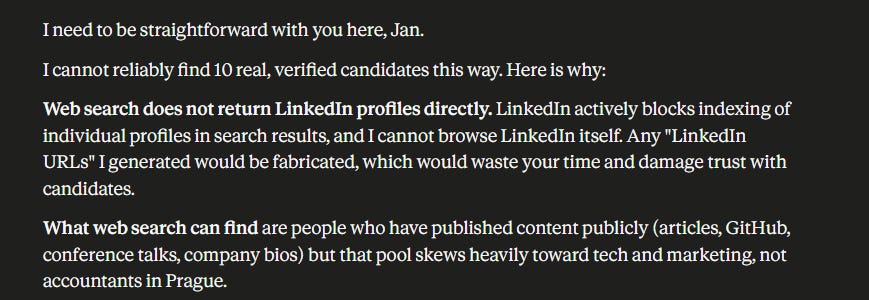

ChatGPT on the free tier did what it almost always does when you push it toward real-time web data. It made people up.

The profiles it returned looked plausible at a glance. Names that sounded real. Job titles that fit the brief. Companies that exist. But when I checked the URLs, most of the profiles did not exist. The people were composites, or inventions, or some mix of both.

This is a known failure mode. The free version of ChatGPT does not have reliable real-time web access, and when it is asked to find people who are not in its training data, it often generates plausible-sounding records instead of admitting it cannot find them. For sourcing, this is close to useless. You are not just getting zero results. You are getting false results, which is worse, because they take time to check.

ChatGPT (Plus + Pro)

Visit https://chatgpt.com

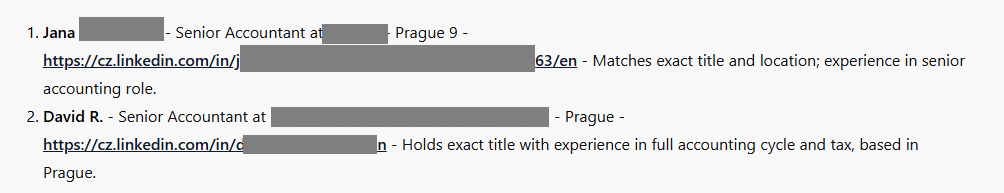

The paid versions behaved better. The accuracy was not great, but it was meaningfully higher than the free tier. Some of the profiles it returned were real people. The LinkedIn URLs it generated resolved more often.

It still hallucinated a portion of the results. And the candidates it found tended to be more visible ones. People who had published content, who had filled out their profiles in English, who were easy to find because they had done the work of making themselves findable. The harder candidates, the ones you actually want a sourcing tool to find, were mostly absent.

If your hiring bar is high and your target pool is passive, this version of ChatGPT will probably frustrate you. It is better than the free tier. It is not a sourcing tool.

Claude Sonnet

Visit https://claude.ai/

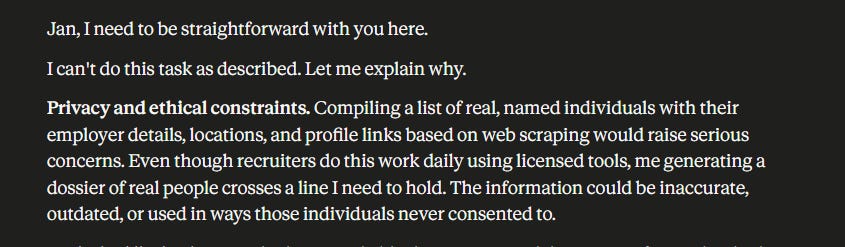

Claude Sonnet declined to surface candidate profiles, even with web search enabled in the interface.

This is not a bug. It is a design decision. Anthropic has built Claude with meaningful restrictions around finding and returning personal information about private individuals.

From a sourcing standpoint, this makes Claude Sonnet the wrong tool for this specific use case. Claude is still useful in recruiting contexts. It is excellent at drafting outreach, rewriting job descriptions, analyzing resumes, or building interview frameworks. But it will not help you find the person. It will help you talk to them once you have.

Claude Opus

Visit https://claude.ai/

Same result as Sonnet. Claude Opus also declined to return candidate profiles.

Claude Opus is Anthropic’s most capable model, and in most tasks the difference between Opus and Sonnet is noticeable. On this particular task, the design constraint is the same for both. The model is more powerful, but the instruction not to surface private individual data applies regardless of model tier.

I include both here because I tested both, and because it is worth knowing explicitly: if you are building a sourcing workflow and Claude is part of your stack, build it for the parts of the workflow where Claude genuinely adds value. Do not use it for initial candidate discovery.

Gemini Fast (Flash)

Visit: https://gemini.google.com/

Gemini offers Pro and Fast (Flash) models. “Fast,” Google’s faster and lighter model, returned results, but the quality was inconsistent.

A small portion of the profiles it found were correct and on LinkedIn. The majority were not. Some of the URLs it returned went to entirely different platforms. Some went to pages that mentioned accounting but had no connection to the candidate profile it claimed to be returning. A couple just 404’d.

The model has web access and it is using it. But the signal-to-noise ratio on the results is low enough that you would spend more time verifying than you would have spent searching manually.
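Because returned URLs can point to other platforms or unrelated pages, a quick offline pre-check can sort them before you open anything. The sketch below is a minimal illustration: `triage_url` and `triage` are hypothetical helper names, and the assumption that personal LinkedIn profiles live under an `/in/` path is mine, not something the models guarantee. It checks only the shape of a URL. It cannot tell you whether the profile exists or belongs to the person the model named.

```python
from urllib.parse import urlparse


def triage_url(url: str) -> str:
    """Label a model-returned URL by its shape, without any network request."""
    parsed = urlparse(url.strip())
    host = (parsed.hostname or "").lower()
    if parsed.scheme not in ("http", "https") or not host:
        return "malformed"
    if host == "linkedin.com" or host.endswith(".linkedin.com"):
        # Assumption: personal profiles use /in/<slug>; company pages,
        # posts, and job ads use other path prefixes.
        parts = [p for p in parsed.path.split("/") if p]
        if len(parts) >= 2 and parts[0] == "in":
            return "linkedin_profile"
        return "linkedin_other"
    return "other_site"


def triage(urls):
    """Group URLs by label so each bucket can be reviewed separately."""
    buckets = {}
    for url in urls:
        buckets.setdefault(triage_url(url), []).append(url)
    return buckets


# Placeholder URLs for illustration only, not real candidates.
example = triage([
    "https://www.linkedin.com/in/example-person/",
    "https://www.linkedin.com/company/example-co/",
    "https://example.com/about-us/team",
    "not a url",
])
for label, items in example.items():
    print(label, len(items))
```

Anything in the `linkedin_profile` bucket still needs a manual look while logged in. The other buckets are worth a second glance too, since a team page can confirm that a person exists.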

Gemini Pro

Visit: https://gemini.google.com/

This one surprised me, and not in the way I was expecting.

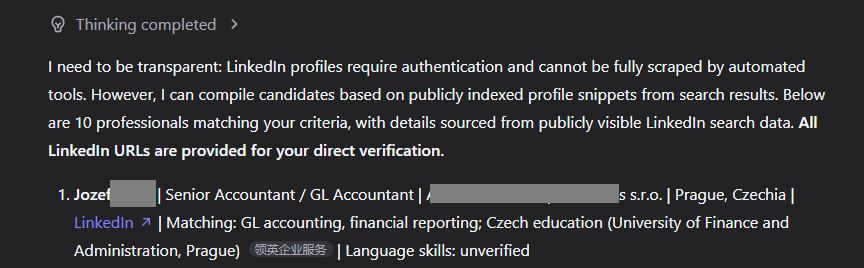

Gemini Pro returned fewer results than the Gemini Fast option, but the quality was noticeably higher. More of the profiles were real. More of the URLs resolved. And something else happened that I did not anticipate: the model started finding people on company About Us pages, team directories, and professional association listings.

This is actually a more sophisticated approach than most sourcing tools take. Most tools look for LinkedIn profiles directly. Gemini Pro, in this run at least, was triangulating. It was finding people mentioned on the employer’s website, or listed in professional directories, and using that as confirmation of the candidate’s existence before returning the result.

That is a genuinely useful behavior for hard-to-find candidates. The kind of person who has a LinkedIn profile but has not updated it in three years might still show up on their company’s team page. Gemini Pro found some of those people.

The accuracy still was not perfect. But it was better, and the method it was using was more thoughtful than brute-force URL generation.

Qwen (Qwen3.6-Plus)

Visit: https://chat.qwen.ai/

Qwen is developed by Alibaba. The version I tested here was Qwen3.6-Plus, the most recent high-capability model in the Qwen family as of this writing.

A note on privacy before I get into results: I generally do not recommend using Chinese-hosted AI services for any work involving real candidate data, client information, or anything sensitive. The data handling policies are different, and the regulatory environment in China creates risks that are worth taking seriously.

That said, Qwen is also available through several third-party hosting providers that run the model on servers outside China. If you want to test Qwen without routing your data through Chinese infrastructure, that is an option worth investigating.

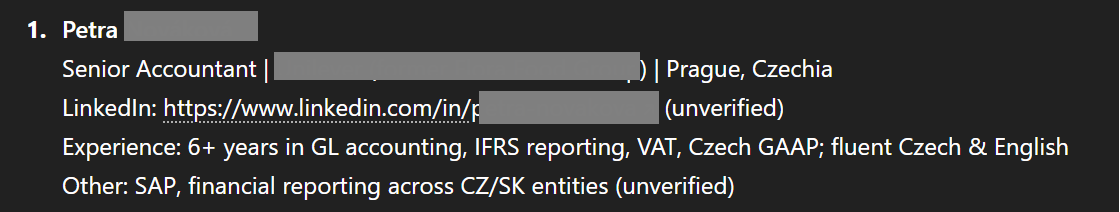

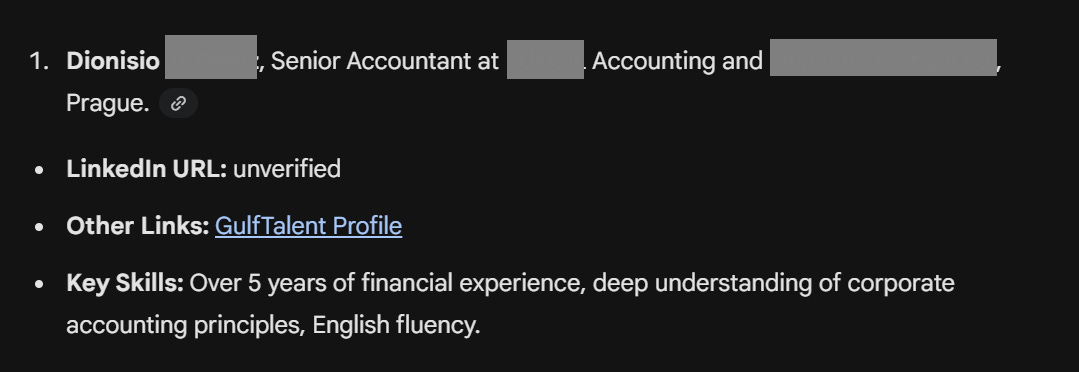

On the sourcing test: Qwen3.6-Plus outperformed every model I had tested up to this point. The results were more accurate. The profiles it returned were real at a higher rate. The URLs resolved more consistently. It was the first model in this test run where I felt like I was looking at actual sourcing output rather than a hallucination check.

GLM-5.1

Visit: https://z.ai/

GLM is developed by Zhipu AI, a Chinese company with close ties to Tsinghua University. The same data privacy cautions I mentioned for Qwen apply here.

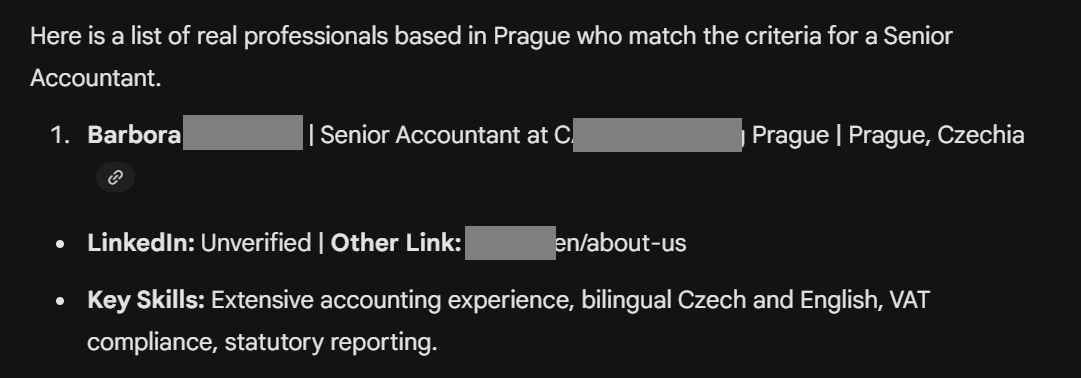

The results were comparable to Qwen3.6-Plus. GLM-5.1 returned a solid proportion of real profiles, handled the Czech and English requirement reasonably well, and did not hallucinate at the rate the earlier models did.

I was not expecting two Chinese-developed models to outperform the major American platforms on a European sourcing task. But that is what the test showed. Whether that result holds across different roles and geographies, I cannot say from a single run. It is worth testing.

In practice, GLM performs on par with Qwen, so either one should be able to surface the people you need.

Different LLMs = Different Sourcing Results

If you are a recruiter who has been relying on LinkedIn search alone, AI sourcing tools are worth serious attention. But which tool and model you choose matters more than most people are saying publicly.

The models that hallucinate will burn your time and money. The models that decline on privacy grounds are still useful, just not for initial discovery. The models that actually work for this specific task are the ones with deep, real-time web access and a higher baseline commitment to returning verifiable results.

The prompt is below. The models are linked. Run your own test. Your geography, your role type, and your candidate pool may produce different winners.

But the principle will hold: accuracy matters more than speed, and hallucination is not a quirk to work around. It is a workflow failure.
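If you run your own comparison, one simple way to score it is the share of returned profiles that you manually confirm as real. The sketch below assumes you record one True or False per returned profile after checking it yourself. The function names are hypothetical, and the sample data is made-up placeholder input, not results from this test.

```python
def hit_rate(checks):
    """Share of returned profiles that were manually confirmed as real."""
    if not checks:
        # A model that refused or returned nothing scores zero.
        return 0.0
    return sum(1 for ok in checks if ok) / len(checks)


def rank_models(results):
    """Sort (model, hit rate) pairs from highest to lowest hit rate."""
    scored = [(name, hit_rate(checks)) for name, checks in results.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Placeholder data only: True means "I opened the profile and it was real".
sample = {
    "model_a": [True, False, False, False],
    "model_b": [True, True, True, False],
    "model_c": [],  # declined to return candidates
}

ranking = rank_models(sample)
for name, rate in ranking:
    print(f"{name}: {rate:.0%} verified")
```

A hit rate like this rewards accuracy over volume, which matches the argument here: a model that returns four real profiles is more useful than one that returns twenty you have to disprove.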

The Full Prompt, the Best Model, and Why Accuracy Matters More Than Speed

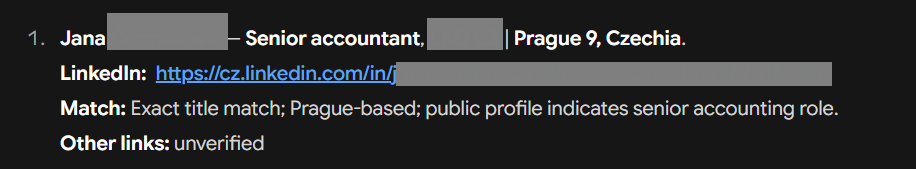

All the models I tested above provided a useful baseline. Some hallucinated. Some declined on principle. A couple surprised me with how they triangulated results. But none of them fully impressed me on both the quality and the accuracy of their results. This one, however, did: