Why Viral LinkedIn Posts Are Rarely What They Seem

Everyone told you it was your writing. It wasn't. Some of those posts you've been benchmarking against were never real to begin with.

Your post got 14 likes. Theirs got 1,400. Same topic. Same format. Maybe even worse writing. You probably told yourself it’s the algorithm. Or your follower count. Or that you posted at the wrong time. None of those are the real answer.

I’ve been posting on LinkedIn for years, and even with all my followers, I still can’t reliably crack 1,000 likes on every post, or even 500. So when I saw someone do it every single week, on every single post, I stopped blaming myself and started paying attention to what’s actually happening.

My posts aren’t bad. I know that because I am constantly asked to ghostwrite for others, and I do. People interact with those posts as well, but even good content doesn’t perform like that, not consistently, not on command, not every single day without fail.

I’m not naming anyone. Naming people doesn’t fix the system, but you can already spot those “experts” getting hundreds of likes and comments within 60 minutes of posting. You’ve probably seen their faces and names in those “influencers to follow” posts or the ones that say, “I grew my LinkedIn from 6k to 400k in 12 months.”

What might help is understanding how the system works, so you stop spending money on webinars and reports designed to make you feel like you’re always one framework away from finally breaking through, even when you are not. The main reason I wrote this is simple: stop feeling bad about your content not performing, when the posts you are comparing yourself to are not playing by the same rules.

This article most likely will not perform well, because the keywords alone will suppress it, but that’s fine. If you found it, I want to give you something more useful than another LinkedIn writing framework: the actual reason the numbers don’t add up, and why you should stop trying to close a gap that was never real.

The Number Nobody Talks About

Let me ask you something first. How often do you get a viral post? Once a year? Once every six months? Every day?

I’ve been posting on LinkedIn for years. In my entire time on LinkedIn, I’ve had exactly one post I’d call truly viral, with around 100,000 likes and more than 10 million views. I remember I was eating lunch at my desk, supposed to be finishing a report, and my phone just kept buzzing. It was a Tuesday. I had a meeting at 2pm I almost missed because I kept refreshing, trying to understand what was going on. That post was a fluke. A coincidence of timing, a topic that hit a nerve, and probably a distribution quirk I’ll never fully understand. I’ve never been able to replicate it, because I simply got lucky with that one.

I have almost 300,000 followers now. If I’m lucky, every month or two, I get one or two posts that crack 1,000+ likes. Two or three. Per quarter. That’s not a complaint, just how it actually works when you’re posting real content to real people. I post almost every day, not for the likes, but because I enjoy sharing and helping others with my content.

I also ghostwrite a lot for others, both people and companies, and even for them, a post hitting 1,000+ likes is not a weekly thing, regardless of whether they have 1K or 500K followers.

So when someone with 100,000-500,000 followers consistently hits 1,000 likes within the first few hours, every single week, EVERY single post, I have a question. Actually, several, but the obvious one first: People with millions of followers don’t reliably pull those numbers. So what’s the difference between them and a LinkedIn “thought leader” with 200K who posts about “morning routines that build executive presence”?

The answer isn’t better copywriting, or a special framework, an AI tool, or posting exactly at 2 pm.

Getting 1,000 likes every single time is not luck. It’s not a skill they can teach you. It’s often paid or coordinated (engagement pod activity). They’ll tell you it’s “organic reach” or that they’re “just supporting their community and fellow content creators.”

These are often the answers I get when I call them out in their comments. They know what they are doing, so let me tell you why they are doing that.

What’s Being Sold and Why the Math Works

I’ll keep this short because I’ve explained it before and the logic doesn’t change.

More followers means higher rates for sponsored posts ($1,000-2,000 USD per post). More apparent credibility means more speaking invitations. More speaking invitations give you a stage, and a stage gives you legitimacy that nobody checks the source of. Once you’re booked to speak about LinkedIn’s algorithm or writing frameworks, the audience assumes you must know how it works. That’s the logic most audiences apply. It’s the logic that the people gaming this system are counting on. This is the best description of “Fake it till you make it”.

The economics are straightforward. Roughly $30 buys 1,000 fake likes, depending on the platform and services. If that fake credibility lets you charge $2,000 for a single sponsored post, or helps you fill 60 seats at a $150 webinar, the return is obvious. You can even sell your LinkedIn viral report of what is working this quarter for $50 or more. If you sell it to enough people, you can fake your engagement for several weeks.
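
If you want to check that math yourself, here is a minimal sketch in Python. It uses only the rough figures quoted above. The function name and the "20 report buyers" scenario are my own illustrative assumptions, and real prices vary by service.

```python
# Rough figures quoted in the text above; real prices vary by service.
COST_PER_1000_LIKES = 30    # USD for 1,000 fake likes
SPONSORED_POST_FEE = 2_000  # USD for one sponsored post
WEBINAR_SEATS = 60
WEBINAR_PRICE = 150         # USD per seat
REPORT_PRICE = 50           # USD per report


def posts_funded(revenue, likes_per_post=1_000):
    """Number of posts that `revenue` could pad with `likes_per_post` bought likes."""
    cost_per_post = COST_PER_1000_LIKES * likes_per_post / 1_000
    return int(revenue // cost_per_post)


webinar_revenue = WEBINAR_SEATS * WEBINAR_PRICE  # 9,000 USD
report_revenue = REPORT_PRICE * 20               # hypothetical: 20 buyers

print(posts_funded(SPONSORED_POST_FEE))  # 66
print(posts_funded(webinar_revenue))     # 300
print(posts_funded(report_revenue))      # 33
```

Under these assumptions, a single sponsored post pays for about 66 posts’ worth of bought likes, and one full webinar pays for 300. Even at one post a day, that is months of fake engagement funded by a single sale.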

And when you are big enough, you will start getting invitations to be part of the LinkedIn pods to “support” other content creators that are in the same pod.

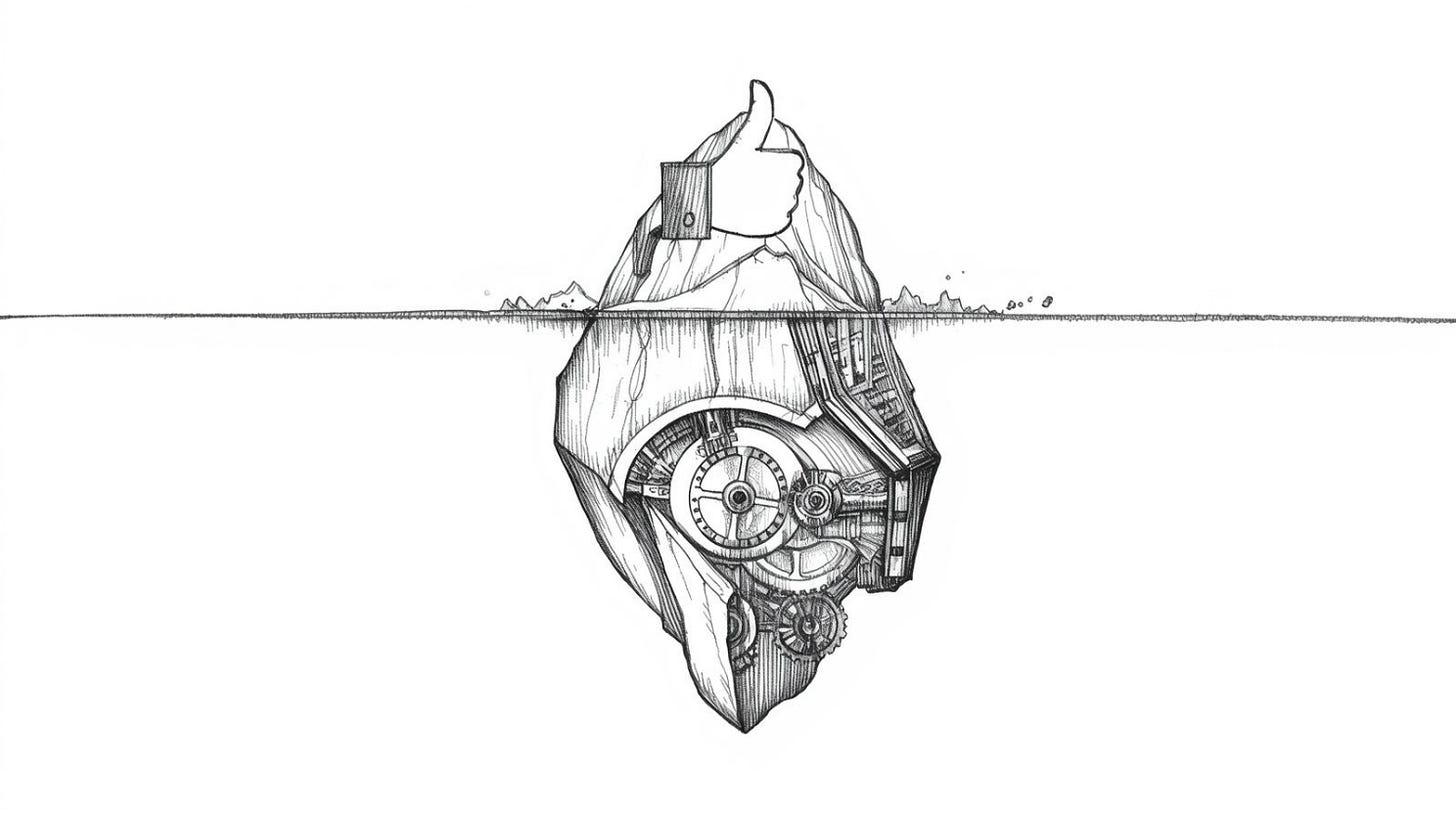

This fake engagement builds visible credibility. Visible credibility gets you into these private engagement pods, the ones where real accounts agree to like and comment on each other’s posts within minutes of publishing. They are using several applications for it, so it’s all automated, which is why their comments and answers are often one or two sentences.

Pod membership keeps your numbers up with slightly more believable activity. That activity gets you noticed by brands looking for influencers with “strong engagement.” Brand deals and speaking fees follow. By the time you’re on a stage talking about LinkedIn strategy, you’ve got third-party validation that makes the original manipulation invisible. You’ll also get social proof from others in the pod. That’s why you often see the same names and faces pop up in your “people you should follow” list.

Some of the loudest voices who publicly claim they “never use pods, only writing frameworks” run joint webinars with people who are members of the largest pods I’ve ever seen documented. Whether that’s deliberate, careless, or something else, I genuinely don’t know. I’ve thought about it a lot, and I don’t have a clean answer, but I think they know and they are OK with it, as this will bring them more followers, deals, and social proof.

What I do know is that it creates a visible performance of expertise that influences who gets hired as consultants, who gets quoted in trade press, and who other people copy their content strategy from. The fake engagement doesn’t just inflate numbers. It distorts people’s understanding of who the actual experts are.

I’m not recommending that anyone buy likes or be part of the pods, as your account could be suspended. I’m just explaining why rational people do it, and why the people who do it are not confused about what they’re doing.

The image above is just one example of a service anyone can use. You can buy likes for your own posts, your friends’ posts, and even your competitors’ posts to hurt them. This is possible on every social media site, so all of them are fighting it. From time to time, they all do an account purge, similar to what Instagram did a couple of days ago.

If one service can offer 6.5 million likes, I wonder how many others are using them or building their own farms. Do the math with me: If LinkedIn has roughly 1.2 billion users, 6.5 million = 0.54% of the entire platform. And that’s quite a lot for one service, and there are dozens of similar services on the market.
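
The same calculation as a short Python sketch, using the rough numbers quoted above. It assumes each like comes from a distinct account, which is the comparison the percentage implies but not something I can verify about any particular service.

```python
LINKEDIN_USERS = 1_200_000_000  # rough platform size quoted above
LIKES_ON_OFFER = 6_500_000      # likes advertised by one service


def share_of_platform(likes, users):
    """Percentage of the user base, assuming one like equals one distinct account."""
    return likes / users * 100


share = share_of_platform(LIKES_ON_OFFER, LINKEDIN_USERS)
print(f"{share:.2f}%")  # 0.54%
```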

Stop Buying Engagement Reports Built on Flawed Data

Over the last few years, I’ve seen dozens of reports. Some were one-pagers, others ran to dozens or even hundreds of pages. They all talked about algorithms, engagement, or how to make your posts go viral. To be honest, I haven’t read all of them, but the majority of those I did read had the same qualities, which I’ll get into below.

Every few months, a new “LinkedIn Engagement/Algorithm/Viral Report” lands. Usually priced between $29 and $200, I even saw one for $499. Usually described as “data-driven” or “based on analysis of over one million (or more) posts.” Usually framed as something you need urgently because “the algorithm just changed again and your reach is dropping again.”

And because you’ll get FOMO (Fear of Missing Out) and want to learn the tricks to save your reach, you’ll end up buying another report.

Note: I’ll give those reports credit: they’re excellent at making people feel like they’re about to learn something proprietary, and they create reliable quarterly FOMO that keeps the purchase cycle running. That’s well-executed product design, whatever you think of the underlying research.

The structural problem is the data source. These studies often analyze posts from tools popular among influencers who are already inflating their engagement (paid or via pod activities). Or data that includes a bunch of these posts propped up by LinkedIn pods and bought engagement.

Plus, many influencers recommend the tools they use to their audiences in their training, especially since they often get 5 to 20 percent affiliate commissions. Those audience members begin using the tools, meaning their posts also enter the data pool. A person with 840,000+ followers getting $3,000 a month to promote a tool used extensively by LinkedIn pod participants is not a neutral data contributor to a study about “what content performs best organically.”

The data is already compromised before the analysis starts. A study that treats bought likes and pod-coordinated engagement as ‘high-performing content’ is telling you to copy behavior that can only work at scale if you’re also buying likes and using pods. It’s describing the output without the inputs that actually generated it.

There’s a concept from economics that applies here. Goodhart’s Law, developed in the context of UK monetary policy in the 1970s, says that when a measure becomes a target, it ceases to be a good measure. Charles Goodhart was thinking about money supply targets, not LinkedIn pods, but the principle is exact. Once “likes in the first hour” becomes the metric everyone is trying to hit, likes in the first hour stop telling you anything real about content quality or genuine audience interest.

So when those reports say posts with a specific character count get “25% higher reach” or that a particular image format drives “30% more comments,” the useful follow-up question is: 25% more than what baseline, for whose audience, in which industry, in which country, with what follower count? The numbers sound precise because precision signals credibility. Precision without a defined comparison group is not data. It’s aesthetics.

One more thing on this, and then I’ll move past it: the only people who actually know how LinkedIn’s algorithm works are the engineers who built it and have access to the data, and they don’t sell webinars. Everyone else, including me right now, is pattern-matching on incomplete information and calling it insight.

Fake Engagement Doesn’t Just Inflate Numbers

This is the part that concerns me more than the money people waste on useless reports and webinars.

When coordinated fake engagement consistently elevates certain posts and certain accounts, it changes what people believe is good content. Someone new to LinkedIn sees that posts formatted a certain way, by certain types of accounts, reliably get thousands of likes. They conclude that format is what works. They conclude those accounts are the experts worth learning from. They copy the style.

They’re copying the surface of something whose actual engine is invisible to them.

I’ve seen this pattern in recruiting specifically, which is the world I work in. People copy posting styles from LinkedIn “recruiting influencers” who have large, engagement-inflated audiences, and then wonder why the same format gets them 40 likes instead of 2,000. The gap isn’t copywriting. The gap is that one of those people is operating with a coordinated support system and the other isn’t.

A randomized experiment published in Science in 2013 by Muchnik, Aral, and Taylor found that artificially adding early positive votes to online comments caused those comments to receive significantly more subsequent organic positive votes, a 25% increase in final ratings. The content didn’t change. The early social signal did. The researchers called it “social influence bias,” and the finding was specific enough to be uncomfortable: even a single early fake upvote measurably distorted what real audiences later chose to engage with.

What that means practically: buying fake early engagement or using LinkedIn pods doesn’t just inflate a single post’s metrics. It trains the real algorithm to show that post to more people, which generates real secondary engagement, which further validates the post in the system, which later gets cited in someone’s algorithm report as proof that a certain posting style works. The original manipulation becomes invisible by the time it appears in the research.

That’s not a conspiracy. It’s just how feedback loops work when the measurement is corrupted at the start.

What Actually Moves Real People

Human psychology is pretty consistent. I’m fairly confident about this next part, not because of any algorithmic data, but because I’ve spent enough time observing my own posts, posts I’ve ghostwritten, and others’ content to form some solid opinions.

Anger generates comments. Not performed, structured anger, but the kind that makes people feel like something is wrong or unfair. Posts that challenge a belief the audience holds strongly, or that describe something people find irritating in professional life. AI-generated content in professional contexts is hitting this right now. People see a clearly AI-written post and rush to criticize it, which gives those posts enormous reach regardless of the quality of the content or the criticism.

We’re going to see a lot more of this: people creating fake posts with AI-generated images to get attention. These kinds of posts are designed to make people angry and start arguments with others who have different opinions.

Humor generates likes. Simple humor. The kind where the punchline is in the image or the last line. My highest-performing organic posts were almost always jokes. I’m not a naturally funny writer, which is part of why I find this annoying to report, but I’ve accepted it as a real pattern. My Friday jokes were some of my top-performing LinkedIn posts.

Faces stop scrolls. This one is actually well-documented. Researchers at Georgia Tech and Yahoo Labs analyzed 1.1 million Instagram photos and found that images with human faces are 38% more likely to receive likes and 32% more likely to attract comments than photos without faces. Number of faces, age, and gender didn’t move the needle. Just the presence of a face did. The mechanism: faces bypass normal inhibitory processing. Your brain doesn’t decide to look at a face. It just does. And social media posts with faces in them tend to get more likes.

A strong hook (the first two lines) matters more than most other factors. Once someone stops scrolling, you have about two seconds. If the third line doesn’t reward the stop, they’re gone and your post gets marked as low-engagement content before most of your audience ever sees it.

Personal stories with specific failures outperform advice posts consistently, in my experience. “Here’s what I learned” gets ignored. “Here’s what I got wrong and what it actually cost me” gets read. I don’t have a clean explanation for why this is still surprising to people who’ve been told it repeatedly. Maybe because the failure-first format requires more vulnerability than most professionals are comfortable with, and so people keep defaulting to the advice format even knowing it underperforms.

Consistency matters, but I’m genuinely less certain about this one than the others. I’ve watched people post daily for a year and gain almost nothing. I’ve watched other people post once a week and grow steadily. I think consistency correlates with the things that actually work (formatting, readability, etc.) rather than being a cause itself, but I can’t prove that from what I’ve seen.

The Algorithm Panic Machine

There are other things that influence reach. Timing matters somewhat. Replying to comments in the first hour matters more than most people realize. These things have been the same for years: if you’re active, the algorithm will reward you. But it’s not just about having good quality content; you also need a bit of luck.

Course/Webinar/Report creators who capitalize on algorithm updates need that information to go out of date so they can sell you the “new” secret. This cycle of creating panic and then selling the solution is their actual product. It’s fueled by that quarterly FOMO you feel when everyone online starts complaining, “My reach is down!” or “Long posts don’t work anymore!”

Instead of giving in to that FOMO, focus on making your content consistently good. You’ll get much better results with that approach than by chasing every new “hack” that comes along. More creators lose their audience and reach by constantly switching things up than by just staying consistent.

Specific numbers in those reports and webinars can also create a false sense of precision. Research by De Langhe and Puntoni shows that people can misread quantitative metrics and treat them as more informative than they really are, which helps explain why a claim like “posts with 1,200-1,400 characters outperform others by 23%” feels more trustworthy than “medium-length posts tend to do better,” even when the underlying evidence for both is equally weak. The number creates a false sense of rigor. The people who write LinkedIn algorithm reports know this. The specific percentages are there to trigger trust, not to describe reality accurately.

What actually doesn’t change: being genuinely useful to a specific audience, writing from real experience, and being honest about things you don’t know. Not because it’s inspiring. Because it’s the only approach that doesn’t stop working when the formatting trend cycles.

My friend spent $150 on one of those algorithm reports about two years ago and shared it with me. The report had nice charts. Most of what it told me described things I already did, with more confidence and better graphic design. I didn’t feel cheated exactly. More like the $150 had bought a PDF that confirmed I wasn’t completely wrong, which is a different category of waste. The really expensive part wasn’t the $150. It was the two hours I spent reading something that mostly just told me what I wanted to hear.

One Last Thing

Your post isn’t broken. You are just playing a game where some of the other players have a card you can’t see, and the scoreboard doesn’t distinguish between how people got their points. That’s it. That’s the whole thing.

The frustration you feel when your carefully written post gets 18 likes while someone’s recycled or even fake observation gets 1,400 is not feedback about your writing. It’s the natural result of competing in a measured environment where a portion of the measurements are purchased. You’re not losing. You’re just not cheating.

I’ve watched genuinely smart people quietly conclude that they must not be good enough at this. They study the top performers. They copy the formats. They buy the reports. They attend the webinars, sometimes run by the very people whose engagement is fake, and they walk away with frameworks and hook templates and posting schedules, none of which close the gap, because the gap was never about frameworks. They do this for months. Some of them do it for years. And the whole time the answer was sitting right there in plain sight: the people they were benchmarking against were not benchmarking against reality.

That’s what actually bothers me about this. Not the fake likes. Not the money changing hands. The thing that bothers me is what it does to real people’s confidence. Someone writes something honest, specific, and useful, it gets 30 likes, and they decide they’re not a good writer. Meanwhile, a manufactured post about “Anthropic shipped Claude Design yesterday.” gets 1,500+ coordinated reactions and becomes evidence of what good content looks like. The signal is inverted. The people learning from that signal are walking in the wrong direction, and nobody stops to tell them the compass is broken.

So post the thing you actually want to say. Write it the way you actually think. Accept that the number it gets will be an honest number, which means it will probably be smaller than the numbers you see around you, because the numbers around you are not honest. That’s a hard thing to sit with. I’m not going to pretend it gets easier. But it’s the only version of this that doesn’t require you to become someone who manufactures their own credibility and then sells courses on how they earned it.

The ones who faked it are still faking it. Still refreshing. Still buying. Still lying awake doing math on whether this month’s pod activity justified the cost, still quietly terrified that the day they stop paying is the day everyone finds out. That is not influence. That is debt, the kind you pay with your integrity in monthly installments, and the interest never stops compounding.

You don’t owe this or any other platform a performance. You don’t owe an algorithm your self-respect. The 30 people who read your post and felt something real, who saved it, who sent it to a colleague, who thought about it on the drive home, those people are your actual audience. They found you without being coordinated into it. That is rarer than any number on a screen, and it is worth more than everything being sold in every LinkedIn webinar running right now combined.

Build the real thing. Slowly, honestly, without buying the shortcut. Because the shortcut doesn’t go where they told you it goes.

No Pods, No Purchases: What I Actually Do

Most advice about LinkedIn reach treats it like a math problem. Post at the right time, use the right hook structure, hit the right character count. You’ve read that version. This isn’t that.

What follows is what I actually use. Not frameworks. Not a system I’m selling. Just three things that have consistently made a difference for me, explained honestly, including the parts that feel counterintuitive or slightly embarrassing to admit.