You Don’t Have a Sourcing Tool Problem

Before buying another sourcing tool, run this diagnostic. Most sourcing problems aren't tool problems. Here's how to find out what's actually broken.

I’ve watched recruiters I count as friends buy the same category of tool three times in four years and be surprised each time it didn’t work.

Not bad recruiters. Not lazy ones. People who cared, who were genuinely trying to solve a real problem, who read the case studies and watched the demo and believed the thing. And then six months later they’re back in the same conversation, just with a different vendor logo on the slide deck.

The pattern is almost mechanical at this point. Req opens. Pipeline is thin. Hiring manager is unhappy. Someone in leadership asks “do we have the right tools?” which is usually code for “I’d like this to feel solved by next quarter.” A purchase happens. Onboarding happens. And then, slowly, the results look almost identical to before.

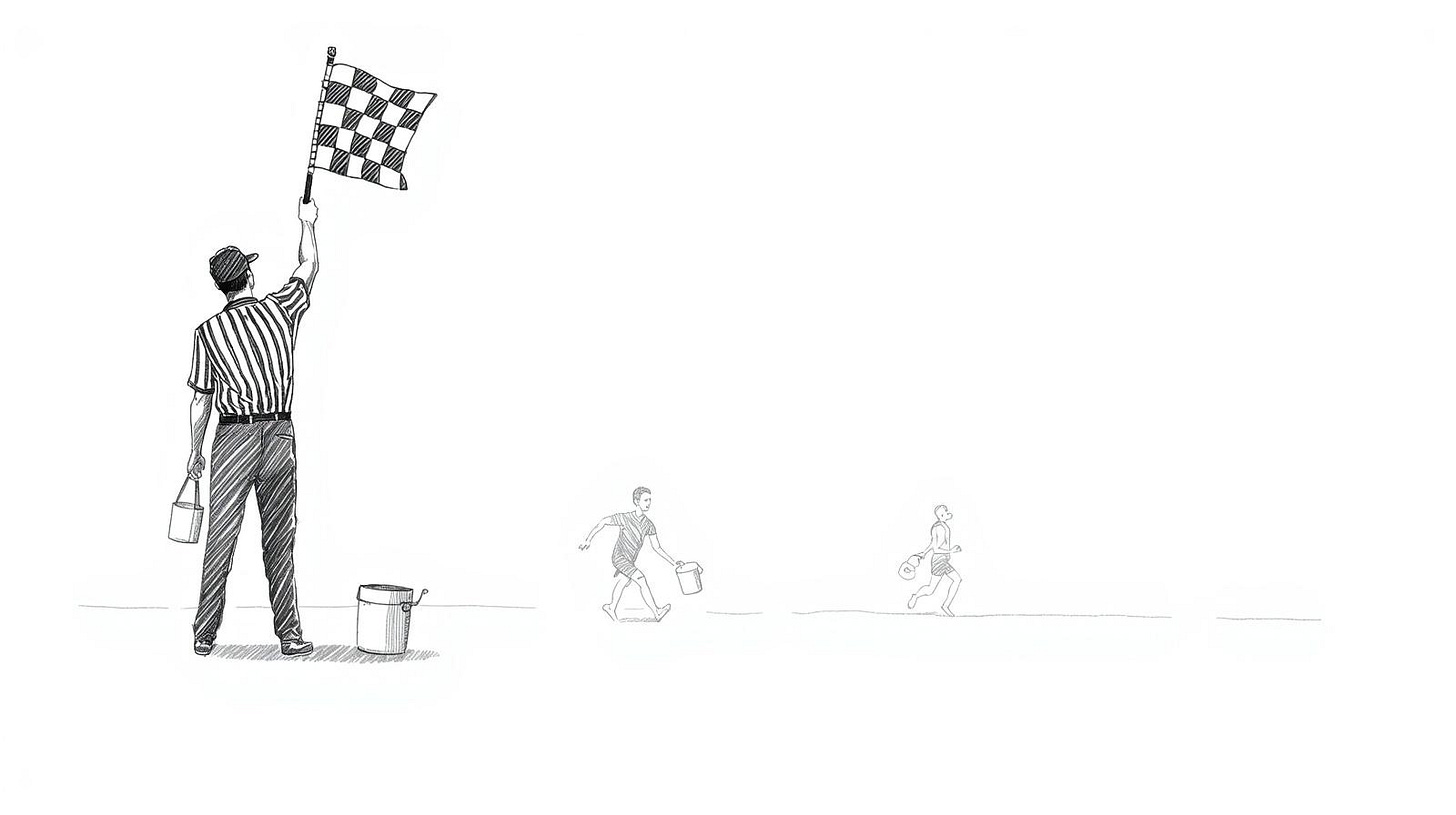

The new platform didn’t fail because it was a bad product. It failed because it was solving the wrong problem. Most sourcing problems aren’t tool problems. They’re targeting problems, messaging problems, process problems, or skill problems. Buying better software to address any of those is a bit like buying a faster car when you’ve been driving to the wrong city because you haven’t noticed you’re holding the wrong map.

I’m not making a case against sourcing tech. Some of it is genuinely useful, I use some of it, and a few specific platforms have changed how I work in ways I wouldn’t give up. But tools are the last thing you should reach for, and almost every team I’ve seen reaches for them first, often within days or weeks of a problem surfacing, before anyone has paused long enough to ask what the problem actually is.

The vendor demo worked exactly as intended

Many recruiting tech vendors are exceptionally good at what they do. Not at sourcing. At selling the idea that their platform solves sourcing.

The demos are built to hit at exactly the right moment. You’re six weeks into a tough req. Your outreach response rates are somewhere between embarrassing and demoralizing. A vendor emails you at 11am on a Tuesday (I always picture this happening on a Tuesday for some reason, maybe because Tuesdays feel like the week has already gotten away from you) and the timing is so good it almost feels personal.

The demo shows a pipeline that practically fills itself. The AI finds candidates you didn’t know existed. The outreach sequences land. The whole thing looks like the problem just disappears.

There’s a body of research on persuasion, Robert Cialdini’s work on influence being the best known, documenting how scarcity (urgency, in a sales context) and social proof push people toward quick, shortcut decisions rather than critical evaluation. Vendors understand this whether or not they’ve read the research. The demo isn’t a neutral information session; it’s a constructed experience designed to feel like relief, and it works because the person in the room is already in pain and wants to believe relief is one signature away.

Which means the moment you’re most likely to buy a new tool is the moment you’re least equipped to evaluate whether you actually need one. You’re reacting to pain, not diagnosing a problem.

And nobody in that state is stopping to ask the diagnostic questions. Is our targeting actually precise? Are we searching the right populations, or just the most accessible ones? Are our messages converting, and if not, is that a volume problem or a quality problem? Is this a real pipeline issue or is it a job spec that excludes 80% of the qualified market before we’ve sent a single message?

I’ve seen some of my recruiter friends spend $40,000 on a sourcing platform when the actual issue was a job description so over-specified it excluded the vast majority of qualified candidates by design. I’ve seen milder versions of the same thing enough times that it stopped feeling like an edge case.
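It’s easy to underestimate how fast stacked requirements shrink a market, so here’s a back-of-the-envelope sketch in Python. The requirements and percentages are hypothetical, and it assumes each requirement filters candidates independently, which real requirements rarely do (correlated ones shrink the pool less). Treat it as an illustration of compounding, not a model of any real job market.

```python
# Hypothetical share of the qualified market that meets each requirement.
# These figures are illustrative assumptions, not data.
requirements = {
    "5+ years in the exact title": 0.6,
    "specific framework version": 0.5,
    "same-industry background": 0.5,
    "named degree": 0.7,
    "on-site in one city": 0.4,
}


def share_meeting_all(shares):
    """Fraction of candidates meeting every requirement, assuming independence."""
    result = 1.0
    for share in shares:
        result *= share
    return result


remaining = share_meeting_all(requirements.values())
print(f"Meets every requirement: {remaining:.1%}")
print(f"Excluded before outreach: {1 - remaining:.1%}")
```

With these made-up numbers it prints 4.2% and 95.8%: five requirements that each look reasonable on their own leave a sliver of the market before anyone has sent a message.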

The trickier thing to admit is that buying a tool is visible. It’s a decision. You can point to it in a meeting and say something happened. Rethinking your search strategy, rebuilding your outreach approach from scratch, having a difficult conversation about whether your requirements are realistic: none of that looks like action to anyone watching from a distance. It’s slower, less tangible, and harder to defend when someone asks what you did this quarter to address the pipeline problem.

There’s also something I think about but can’t fully explain: the way vendor demos seem to specifically target the gap between what a recruiter knows is wrong and what they feel capable of fixing on their own. I don’t know if that’s deliberate or just effective product design. Maybe both.

What’s actually upstream

The real problem lives upstream of the tools. Almost always.

The three places I look first when sourcing is underperforming: how the candidate population is being defined before a single search runs, whether outreach is written for the specific person receiving it or for a generalized idea of that person, and whether sourcing is happening continuously or only in response to an open req.

That last one matters more than most people realize. Most sourcing is still reactive. A req opens, panic starts, sourcing begins. Which means most pipelines are empty by default, and the recruiter is always starting from zero regardless of how long they’ve been in the role. A better sourcing platform doesn’t change that structural problem. It just lets you sprint from zero a little faster.

There’s research from Bersin by Deloitte (I’m pulling this from memory, so verify it before citing it formally) finding that organizations with mature proactive pipelining practices filled roles significantly faster and at a lower cost-per-hire than those operating reactively. The mechanism isn’t complicated. If you’ve spent eighteen months building relationships with people in a specific function and a req opens in that function, you’re not sourcing anymore. You’re calling people you already know. That’s a fundamentally different activity with fundamentally different results.

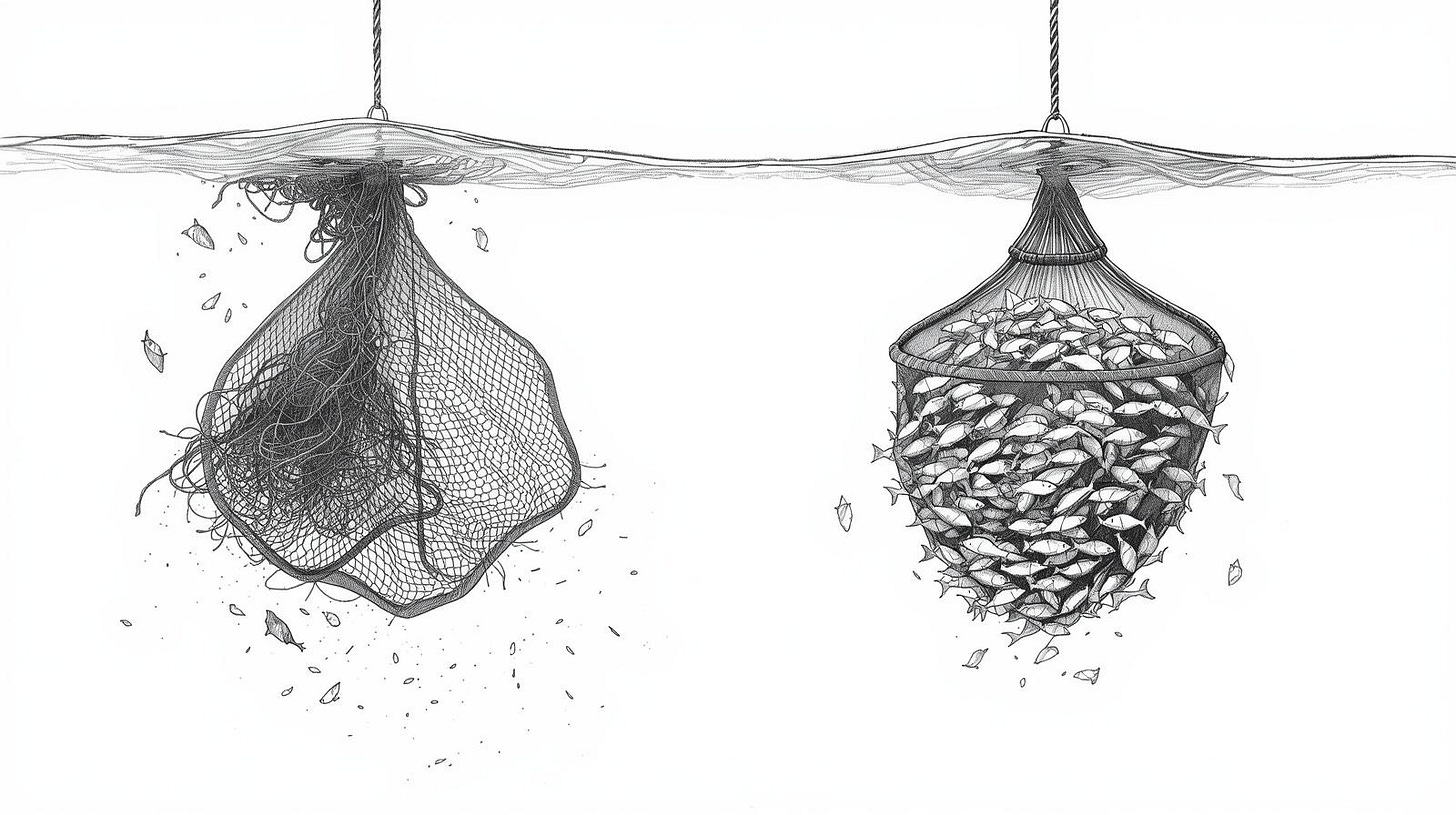

The outreach piece is where I see the most visible dysfunction, partly because I still get contacted regularly, and a lot of those messages are bad in the same way. Not bad in the sense of being offensive. Bad in the sense of being written by someone who spent eleven seconds on my profile before hitting send. “I came across your background and think you’d be a great fit for an exciting opportunity” contains zero information and zero evidence that I specifically was considered. It could have been sent to anyone with my job title. In many cases it was.

What gets responses, in my own outreach and from what I’ve seen in teams I’ve worked with, is specificity. Not flattery. Actual specificity. You noticed something particular about this person’s career trajectory, or their published work, or a gap between their current role and what they seem to be moving toward. That requires time per message. It doesn’t scale the same way a sequence of generic templates scales. And no sourcing platform resolves that tension, though several of them promise to.

A 2019 analysis by LinkedIn Talent Solutions found that InMail response rates varied by more than 40 percentage points based on message quality, with highly personalized outreach dramatically outperforming generic templates. Same platform. Same tool. Completely different results depending on what the recruiter put into it.

I keep meaning to find good published data on personalization at scale: not just one-off outreach, but high-volume campaigns where personalization is partially systematized. I’ve seen internal numbers from a couple of companies, but nothing I could cite cleanly or have permission to share.

There is a version of the reactive sourcing problem that is worth naming. Some recruiting teams are so understaffed relative to their req load that proactive pipelining is genuinely impossible, not theoretically impossible but actually impossible given the headcount and the demand. In those situations the tool purchase sometimes is the right call, not because it fixes the strategy problem but because it buys time.

I have complicated feelings about that. It feels like treating the symptom, but sometimes the symptom needs treating while you build the case for more resources. I can’t give clean advice for that situation because it depends on organizational context I can’t see from the outside. What I can say is that if you’re in it, you should at least know that’s what’s happening, and be honest about it internally rather than letting the tool purchase stand in for a capacity conversation that needs to happen.

Boolean isn’t glamorous, and that’s the point

The actual foundation of sourcing is boring. That’s probably why nobody talks about it the way they should, and why it’s so easy to sell a shiny alternative.

Advanced Boolean search, real Boolean and not the watered-down version most ATS interfaces support, is genuinely powerful and genuinely underused. So is X-ray searching across GitHub, academic publications, technical forums, and niche communities that have nothing to do with LinkedIn. Same for building a candidate scoring rubric before you search, which forces you to define what qualified looks like before you evaluate anyone against it. These are the skills that separate sourcers who consistently produce from sourcers who are perpetually behind.
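To make “real Boolean” concrete, here’s a minimal Python sketch that assembles an X-ray query deliberately: synonyms grouped with OR, separate concepts combined with AND, noise excluded with a minus sign, and the whole thing restricted to one site. The role, the terms, and the `build_xray_query` helper are hypothetical. The `site:`, quoted-phrase, `OR`, and `-` operators are standard Google search syntax, but operator support varies between engines and changes over time, so test any string before trusting its results.

```python
def or_group(terms):
    """Join synonyms into one parenthesized OR group, quoting multi-word phrases."""
    quoted = [f'"{term}"' if " " in term else term for term in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_xray_query(site, concept_groups, exclusions=()):
    """Hypothetical helper: a site restriction, an AND of OR-groups, then exclusions."""
    parts = [f"site:{site}"]
    parts += [or_group(group) for group in concept_groups]
    # Minus-prefixed terms drop noise such as tutorial and course repositories.
    parts += [f'-"{term}"' if " " in term else f"-{term}" for term in exclusions]
    # Google and most web search engines treat the spaces between parts as AND.
    return " ".join(parts)


query = build_xray_query(
    "github.com",
    [
        ["terraform", "pulumi"],
        ["kubernetes", "k8s"],
        ["site reliability", "SRE", "platform engineer"],
    ],
    exclusions=["tutorial", "course"],
)
print(query)
```

The point of building it this way is that each OR-group stands for one concept you’ve decided actually matters, so changing the population you search means editing a group on purpose rather than appending keywords until the results look plausible.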

None of this is new. Recruiters have been doing Boolean search since roughly the late 1990s. Which is probably part of why it doesn’t feel exciting to bring up in a leadership conversation about improving sourcing outcomes.

I spent about a year early in my career almost entirely avoiding deep Boolean work because it felt tedious and I assumed the incremental improvement wouldn’t justify the time. This was wrong in an embarrassing way. The difference between a mediocre Boolean string and a well-constructed one isn’t 10% better results in the same population. It can be a completely different population.

I built a string once for a niche infrastructure role, probably around 2016, that surfaced sixty-three people who genuinely met the requirements. The previous approach had been returning a large volume of roughly half-relevant results requiring significant manual filtering. The time saved wasn’t in the search itself. It was in everything that came after.

Something that doesn’t get discussed enough is what happens to a sourcing team’s institutional knowledge when they become dependent on a single platform. I’ve seen teams reach a point where nobody could articulate how they would source a specific role without their primary tool. No fallback. No manual process. No understanding of where else the candidates might live. When the contract didn’t renew or the platform changed its data access policies, they were essentially starting from scratch. That fragility is a direct consequence of skipping the fundamentals.

I believe fundamentals can be taught. I’ve seen it work. But I’ve also seen situations where a recruiter had the training, understood the concepts, could articulate the right approach in a conversation, and still produced mediocre sourcing results because the judgment layer, knowing which string to build for which role, wasn’t there yet. How long does that take to develop? I genuinely don’t know. Probably longer than most organizations are willing to invest before losing patience and buying something instead.

Where most escape attempts fail

So you’ve diagnosed the problem. It’s not the tool. It’s targeting, or messaging, or reactive sourcing, or skills that haven’t been developed yet, or some combination. You decide to fix it.

Here’s where it gets harder than the diagnosis suggests.

Most attempts to rebuild sourcing fundamentals fail not because the diagnosis was wrong but because no time is protected for doing the work differently. You’re still carrying the same req load. Hiring managers still expect the same update cadence. Nobody has told them you’re rebuilding your approach from the ground up, because that conversation is awkward and you’re not sure how it will land. So you try to do both things at once: run the existing system while rebuilding it underneath yourself. That’s genuinely difficult, and most people can’t sustain it past the first hiring spike.

I’ve watched this play out more than a few times. A recruiter or small team gets serious about fixing their fundamentals. They do the training. They start building better search strings. They begin thinking about pipelining as an ongoing activity rather than a panic response. And then a hiring manager gets impatient, or three reqs open in the same week, and they fall back to the old approach because it’s faster, even if it produces worse results. The new approach never gets a real test because real conditions never allow for one.

The teams that actually make the shift tend to do one specific thing differently: they protect a block of time for proactive sourcing and treat it like an immovable meeting. Not “I’ll work on pipelining when things slow down” (things don’t slow down) but a specific ninety minutes, mid-week, that doesn’t move for anything below a genuine emergency. Two of those per week. Calendar blocked, marked busy, defended.

This sounds almost insultingly simple. It also works in a way that most more sophisticated interventions don’t, precisely because it’s structural rather than motivational. You’re not relying on discipline or intention in the moment. You’re relying on a calendar entry that already exists.

The cost is real, though. Those blocks create friction. Hiring managers notice when you’re not immediately available. Some of them push back. You have to decide in advance that you’re willing to have that conversation, and not everyone is, and I don’t think there’s a clean answer for what to do if your organization genuinely won’t allow it.

What I’d suggest, before the next vendor conversation, is to actually run a basic diagnostic on your current sourcing. Pull your last three searches and look at the populations you were targeting. Were they genuinely precise, or were you going to the most familiar pool? Look at your last twenty outreach messages and ask honestly how many of them could have been sent to a different person in the same role with zero changes. Look at your response rates and ask whether that number is a tool problem or a message problem.
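If your sent messages live in an export or a spreadsheet, one part of this audit can be roughed out in code. Here’s a minimal Python sketch, assuming you have each message’s text and the recipient’s first name: it swaps the name for a placeholder, then flags messages that are nearly identical to at least one other message, using the standard library’s `difflib.SequenceMatcher`. The 0.9 threshold, the `interchangeable_messages` helper, and the sample messages are assumptions for illustration, and textual similarity is only a proxy: a message can be unique and still generic. Reading them yourself is still the real test.

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize(message, recipient_name):
    """Swap the recipient's name for a placeholder so 'Hi Ana' and 'Hi Ben' match.

    Naive on purpose: a name that also appears inside another word gets replaced too.
    """
    return message.replace(recipient_name, "{name}").lower().strip()


def interchangeable_messages(messages, threshold=0.9):
    """Return indices of messages nearly identical to at least one other message.

    messages: list of (recipient_name, text) pairs.
    threshold: similarity ratio (0 to 1) at or above which two messages
    are treated as the same template.
    """
    normalized = [normalize(text, name) for name, text in messages]
    flagged = set()
    for i, j in combinations(range(len(normalized)), 2):
        ratio = SequenceMatcher(None, normalized[i], normalized[j]).ratio()
        if ratio >= threshold:
            flagged.update((i, j))
    return sorted(flagged)


# Hypothetical sample; in practice, load your last twenty sent messages.
messages = [
    ("Ana", "Hi Ana, I came across your background and think you'd be a great fit for an exciting opportunity."),
    ("Ben", "Hi Ben, I came across your background and think you'd be a great fit for an exciting opportunity."),
    ("Cara", "Hi Cara, your talk on migrating a payments ledger to event sourcing is why I'm writing: our team is about to start the same migration."),
]

flagged = interchangeable_messages(messages)
print(f"{len(flagged)} of {len(messages)} messages match another one almost word for word")
```

Here the first two messages get flagged and the third doesn’t. Comparing every pair is quadratic, which is fine for twenty messages and not meant for an entire outreach history.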

If that audit is uncomfortable, that discomfort is probably pointing at something real.

The next platform you buy will likely be a competent product. That’s not the issue anymore; most sourcing tools are reasonably well built. The issue is that a competent product applied to an undiagnosed problem still produces the same results you were getting before, and then you’re twelve months older and out the license fee and no closer to understanding what was actually wrong.

Start with the audit. The tool conversation will still be there.

The Sourcing Diagnostic I Run Before Any Tool Decision

Most sourcing diagnostics start with the wrong metric. People pull time-to-fill, or response rates, or pipeline volume, and those numbers tell you something is wrong but not what or where. They’re output metrics. The problem is almost always in the inputs, and the inputs are almost never measured.

What follows is the sequence I actually use when I’m evaluating a sourcing function, to figure out where the real problem is before anyone decides anything about tools, process, or headcount. It’s not elegant and it doesn’t fit on a single slide, and that’s fine.